Jan 21, 2026

Adaptyv Nipah Binder Competition results: winners, what worked, and how we validated and ranked 1’200 designs

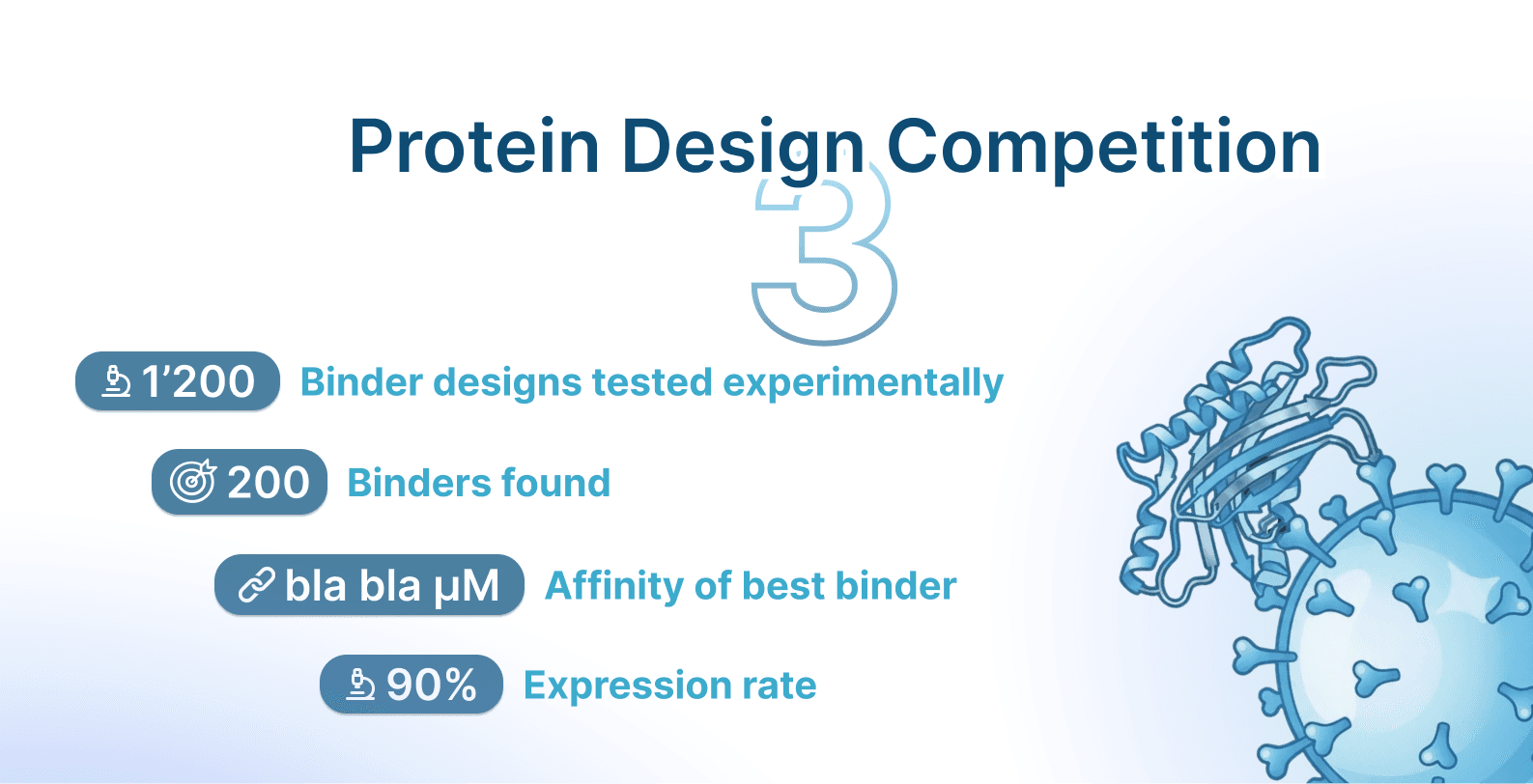

After a busy few weeks running binding assays on a total of 1’200 designs, we’re finally ready to release the results for the Nipah Binder Design Competition. All results are live now on Proteinbase: https://proteinbase.com/competitions/adaptyv-nipah-competition

— Screenshot from Proteinbase/results

From 10’000 designs to 1’200 experimentally tested sequences

If you followed the competition, you already know the goal: design a binder against the Nipah virus Glycoprotein G (NiV-G) that could disrupt its interaction with the human ephrin-B2/B3 receptors, an essential step the virus uses to enter host cells and initiate infection. We hosted this on our Proteinbase platform, an open-source repository of designed proteins and their functional measurements. There, you could submit your entry as a Collection of at most 10 sequences, each with a maximum length of 250 amino acids. Another constraint was that each design had to differ by at least 10 amino acids from known binders and therapeutics, prompting participants to take either the de novo design route (creating a brand new binder from scratch) or the lead optimization route (mutating a known binder until it satisfied the constraint). These were all the rules.

From there, we had over 10’000 designs submitted, so selection was rather complex. We first selected the top 600 based on Boltz-2 ipSAE score, a computational metric that helps distinguish binders from non-binders. Another 200 designs were chosen through a “popularity contest”, and 400 more were selected by our pool of experts, protein designers from academia and industry labs. This selection was intentionally a mix of “cool designs that just didn’t make it” and “designs that are likely working but weren’t well-liked by ipSAE”.

Mirroring trends from our previous binder design competition, participants again leaned heavily on established tools such as RFDiffusion and BindCraft for binder generation. However, the design landscape is rapidly evolving. In this latest competition, the newly released BoltzGen model emerged as the most widely adopted design tool.

Overall, the community response was incredible; you can find it summarized in a previous blog post. We were just as excited about running the experiments, validating all your designs, and releasing the results. We’re happy to share them today!

— Overview figure (best KD, % hit-rate, % expression, best model, best collection)

The winners

Leaderboard (top 10)

| Rank | Proteinbase ID | Designer | Design class | Method (short) | KD (proxy) |
|---|---|---|---|---|---|
| 1 | | | | | |
| 2 | | | | | |
| 3 | | | | | |
| 4 | | | | | |
| 5 | | | | | |

— Or screenshot of the final leaderboard

X takes the crown with a Y binder with Z pM affinity. Looking back at their method, it all clicks: they used abc, then xyz, leading to aaaaa.

We’ve seen a lot of X model in the top 10. This is super impressive, given that model X is built on Y by abc, thus making it easy to create new binders. You can run it yourself here. Moreover, several top binders are antibodies/de novo/leads/whatever.

One thing we noticed early is that “strong binder” does not correspond to a single binding mode. We’ve seen strong minibinders and strong antibodies alike. Some designs look great because they bind their target quickly, even at the lowest concentrations, whilst others look great because the off-rate is painfully slow. Both can lead to an impressive binding affinity (K_D) value.
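To make the point concrete, here is a minimal sketch of the standard relation K_D = k_off / k_on. The rate constants below are hypothetical values chosen only for illustration; they are not measurements from the competition.

```python
def kd_from_rates(k_on: float, k_off: float) -> float:
    """Equilibrium dissociation constant K_D (M) from k_on (1/(M*s)) and k_off (1/s)."""
    if k_on <= 0:
        raise ValueError("k_on must be positive")
    return k_off / k_on


# Hypothetical rate constants (assumptions, not competition data).
fast_on = kd_from_rates(k_on=1e7, k_off=1e-2)   # fast association, ordinary dissociation
slow_off = kd_from_rates(k_on=1e5, k_off=1e-4)  # ordinary association, very slow dissociation

print(f"fast on-rate design:  K_D = {fast_on * 1e9:.1f} nM")
print(f"slow off-rate design: K_D = {slow_off * 1e9:.1f} nM")
```

Both hypothetical designs end up with the same K_D even though their sensorgrams would look very different, which is why the kinetic profile is worth reading alongside the headline affinity.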

Which team had the best hit rates?

We do not want to obsess over #1 when it comes to binding affinities. Another important metric is % hit-rate, especially when it comes to validating whether your approach works reliably and your single design wasn’t just an out-of-distribution sampling fluke.

We are highlighting hit rates (binders / tested) for designers who passed a minimum sample size threshold ({{HITRATE_MIN_TESTED}}). Under that constraint, the best hit-rate designers were {{HITRATE_1_DESIGNER}} ({{HITRATE_1_BINDERS}}/{{HITRATE_1_TESTED}}, {{HITRATE_1_PCT}}%), {{HITRATE_2_DESIGNER}} ({{HITRATE_2_BINDERS}}/{{HITRATE_2_TESTED}}, {{HITRATE_2_PCT}}%), and {{HITRATE_3_DESIGNER}} ({{HITRATE_3_BINDERS}}/{{HITRATE_3_TESTED}}, {{HITRATE_3_PCT}}%).
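A hit-rate table like this can be computed in a few lines. The sketch below assumes a flat list of (designer, is_binder) records and a minimum number of tested designs; the designer names, records, and threshold are made up for illustration and do not reflect the real results.

```python
from collections import defaultdict


def hit_rates(results, min_tested):
    """Return (designer, binders, tested, hit_rate) rows, best hit rate first.

    Designers with fewer than `min_tested` tested designs are excluded,
    so a single lucky design cannot top the table.
    """
    tested = defaultdict(int)
    binders = defaultdict(int)
    for designer, is_binder in results:
        tested[designer] += 1
        binders[designer] += int(is_binder)
    table = [
        (designer, binders[designer], n, binders[designer] / n)
        for designer, n in tested.items()
        if n >= min_tested
    ]
    # Sort by hit rate, then by sample size, then by name for stable output.
    return sorted(table, key=lambda row: (-row[3], -row[2], row[0]))


# Hypothetical records (assumptions, not competition data).
results = [
    ("designer_a", True), ("designer_a", True), ("designer_a", False), ("designer_a", False),
    ("designer_b", True), ("designer_b", False), ("designer_b", False), ("designer_b", False),
    ("designer_c", True),  # 1/1 = 100%, but below the sample-size threshold
]
ranking = hit_rates(results, min_tested=3)
for designer, n_binders, n_tested, rate in ranking:
    print(f"{designer}: {n_binders}/{n_tested} ({rate:.0%})")
```

The threshold matters: without it, the hypothetical designer_c would rank first on a single tested design.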

— Screenshot of the winning collection

Looking at all the results

Moving from the top 10 leaderboard to all available results, we see some interesting patterns. Overall, the expression rate was quite high: {{EXPRESSED_N}} expressed/loaded ({{EXPRESSED_PCT}}%). Even more impressive, {{BINDERS_N}} showed binding ({{BINDERS_PCT}}%), and {{STRONG_BINDERS_N}} landed below the {{STRONG_KD_THRESHOLD}} threshold we use to define a strong binder.

In our standardized cell-free setup, it is starting to look like expression is close to “solved enough to iterate.” Not in a philosophical sense, and definitely not across all expression systems, but in the practical sense that a lot of teams are no longer losing most of their submission budget to proteins that never show up. We have seen this phenomenon before and ascribed it to people using more specialized design models such as SolubleMPNN, a ProteinMPNN variant trained only on soluble proteins that designs a sequence for a given backbone structure. Others used solubility predictors or evolutionary likelihoods from protein language models, which correlate well with expression success.

We’d go as far as to claim that binding has been solved. More than 4 years ago, it was rather difficult to get any de novo binder against a target: for example, the Baker lab paper that motivated our first EGFR competition reached a hit-rate of 0.01%. Now we can report an 8% hit-rate on an unconventional viral target, which doesn’t have too many solved complexes in databases like the PDB, in a contest where people used a wide variety of methods and did not always pick the most effective ones. At that hit-rate, a single 96-well plate should yield roughly 7-8 binders on average (0.08 × 96 ≈ 7.7), thus lowering the need for high-throughput screening in library/display-based platforms.
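The per-plate arithmetic can be checked with a simple binomial model. This sketch assumes every well is an independent draw with the same 8% chance of being a binder, which is a simplification: real hit rates vary by method and designer.

```python
from math import comb


def binomial_pmf(k: int, n: int, p: float) -> float:
    """Probability of exactly k hits in n independent trials with hit probability p."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)


def prob_at_least(k_min: int, n: int, p: float) -> float:
    """Probability of k_min or more hits in n independent trials."""
    return sum(binomial_pmf(k, n, p) for k in range(k_min, n + 1))


wells = 96        # one plate
hit_rate = 0.08   # overall hit-rate quoted in the text

expected = wells * hit_rate
print(f"expected binders per plate: {expected:.2f}")
print(f"P(at least 1 binder):  {prob_at_least(1, wells, hit_rate):.4f}")
print(f"P(at least 7 binders): {prob_at_least(7, wells, hit_rate):.2f}")
```

Under these assumptions, finding at least one binder on a plate is close to certain, while hitting seven or more on any given plate is likely but not guaranteed.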

On top of that, affinity tuning also seems solved. Our EGFR round 2 competition’s winner was an optimized antibody in the low nM range (having started from an existing antibody), but now de novo methods can yield binders in the same affinity range. We believe this is a significant shift in binder design.

Thus, this competition is another data point in a trend we have been seeing for over a year now: the community has internalized a bunch of the constraints and best practices, and it shows up in the lab when it comes to expression, hit-rate %, and affinity.

— Maybe some bar plots if we have the time. Could make a simple bar plot comparing hit-rates across all competitions

Strong binders aren’t the be-all and end-all

— if we have the data —

We performed additional experiments to assess whether the top binders could lead to potential therapeutics. Binding is not always the prime goal: properties like specificity (ensuring the binder only binds to its cognate region and nothing else), neutralization (attaching more strongly than the natural receptor, thus likely impeding viral recognition and entry), and developability (solubility, aggregation propensity, immunogenicity, toxicity, half-life, stability, and other key metrics) take center stage in therapeutic design. We decided to assess neutralization and specificity.

For neutralization, we measured whether the top 10 designed binders can displace human ephrin-B2/B3. Interestingly, the highest affinity binder was not able to do this, likely due to … Out of N, M were neutralizing, which is an impressive feat.

To quantify specificity, we ran the same SPR binding affinity assay with Human Serum Albumin (HSA) as the target. HSA is present in large amounts in our bodies, so a design that also binds it is likely to stick to other off-target proteins as well, which we definitely want to avoid. We discovered another peculiar phenomenon: {{HSA_BINDERS_N}} of the top binders also showed affinity for HSA. This doesn't disqualify them, as off-target binding can sometimes be engineered out, but it is a reminder that high affinity for your target does not guarantee specificity.

— Simple bars of the neutralizing and specific out of top 10

So who’s better at selecting the best designs: ipSAE, the community, or the experts?

We had 3 design selection approaches: 600 designs picked by in silico ranking with Boltz-2’s ipSAE (shown in the literature to distinguish between binders and non-binders better than any other computational metric), 200 picks from community preference via a popularity contest, and 400 picks from our panel of protein design experts. Now let’s see who’s better at predicting binders:

First, it seems ipSAE is moderately correlated with binding affinities (Kendall’s tau, p-value), way better than the ipAE, ipTM, or ESM2 log-likelihood scores we had used before. It discriminates binders reliably, albeit not perfectly, with an AUC-ROC of X. As such, it’s a great filter, but optimizing for it does not necessarily lead to stronger binders (e.g., Nick Boyd ranked the highest in terms of ipSAE, yet his binder had X nM binding affinity). Overall, ipSAE ranking led to {{IPSAE_BINDERS_N}} binders and a hit-rate of {{IPSAE_HITRATE_PCT}}%.
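For readers who want to reproduce this kind of analysis on the released data, here is a minimal dependency-free sketch of the two statistics mentioned above. It computes Kendall's tau-a (no tie correction, no p-value) and AUC-ROC as the probability that a random binder outscores a random non-binder. The scores, labels, and pKD values are invented for illustration; for a real analysis, `scipy.stats.kendalltau` and `sklearn.metrics.roc_auc_score` provide tie handling and significance testing.

```python
from itertools import combinations


def kendall_tau_a(x, y):
    """Kendall's tau-a: (concordant - discordant) pairs / all pairs; ties count as neither."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length sequences with at least 2 items")
    score = 0
    for i, j in combinations(range(len(x)), 2):
        product = (x[i] - x[j]) * (y[i] - y[j])
        score += (product > 0) - (product < 0)
    n_pairs = len(x) * (len(x) - 1) // 2
    return score / n_pairs


def auc_roc(scores, is_binder):
    """Probability that a random binder outscores a random non-binder (ties count 0.5)."""
    pos = [s for s, b in zip(scores, is_binder) if b]
    neg = [s for s, b in zip(scores, is_binder) if not b]
    if not pos or not neg:
        raise ValueError("need at least one binder and one non-binder")
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Hypothetical data (assumptions, not competition results).
ipsae = [0.82, 0.75, 0.60, 0.41, 0.30]
is_binder = [True, False, True, False, False]
auc = auc_roc(ipsae, is_binder)

# Among binders only: score vs. affinity as pKD = -log10(K_D), higher means stronger.
binder_scores = [0.82, 0.60, 0.70]
binder_pkd = [9.1, 8.0, 7.5]
tau = kendall_tau_a(binder_scores, binder_pkd)

print(f"AUC-ROC: {auc:.3f}")
print(f"Kendall tau-a: {tau:.3f}")
```

Keeping the two numbers separate mirrors the point in the text: a score can be a decent binder/non-binder filter (AUC) while ranking affinities among binders only weakly (tau).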

Surprisingly, the popularity ranking correlates better/worse/quite well with affinity (Kendall’s tau, p-value). We allowed people to vote for their preferred submissions that did not make the ipSAE leaderboards via a starring system. We did not expect this to yield such impressive results: {{POPULARITY_BINDERS_N}} binders, {{POPULARITY_HITRATE_PCT}}% hit-rate. This looks like a protein design-specific case of the wisdom of the crowd, the phenomenon Francis Galton observed when the median guess of 800 fairgoers for the weight of an ox landed within 1% of the actual value. Maybe all we need is just a pool of good (and independent) guess-pickers to select the next therapeutic lead. And maybe this generalizes to other properties too!

When it comes to the experts’ selection, we’ve seen {{EXPERTS_BINDERS_N}} binders, leading to a hit-rate of {{EXPERTS_HITRATE_PCT}}%. We noticed the experts optimized more for design diversity, with some choosing completely “out-of-distribution” design techniques over more consolidated ones like BoltzGen and BindCraft. This explains the slightly lower, but still reliable, hit-rate.

— Simple overview / table of hit-rate, best KD per selection strategy. Maybe correlations of scores/starts and affinity or ROC curves.

— Also hit-rates per expert?

It looks like binding prediction is in a similar place to where EGFR was after round 2: we can generate binders with great reliability, but we still struggle to predict which designs will be best before the wet lab, thus we’re bottlenecked by our predictive/scoring models. This is a glaring data gap. The only real way out is more standardized experimental datasets, with negative data included, and we’re determined to make that possible and available to everyone on Proteinbase.

Bonus: Is rational design all you need?

— Could remove, depends it Jude Wells wins or he’s in top 5/10 etc.

Rational design is still part of every winning workflow. It shows up in epitope choice, in which constraints people apply, in how they think about neutralization, and in the “obvious” decisions that stop you from wasting weeks. There are some methods, however, that rely more on rational engineering than on selecting hot-spots, clicking run, and letting a pre-trained AI model take the lead. One such example is Jude Wells’s approach, which involved looking through the literature for known binders, finding a shared binding motif, and splicing it into a scaffold. This is the perfect example of a working rational engineering approach, with his binder ranking among the top 10 with a AAAA KD.

The best-performing workflows are usually hybrids. They have a hypothesis about the binding site, they sample a large space of candidates, and they filter aggressively with metrics that encode experience (including negative experience), physical priors from software like Rosetta, and priors from protein language/foundation models.

Bonus: The prediction market

We also ran a prediction market on Manifold while the wet lab was running, because it forces everyone (including us) to be specific about what we expect. Questions covered which design method would be the most successful, the binder hit-rate range, and which designers would have binding proteins. Most of the crowd got {{MARKET_QUESTION_1}} right and {{MARKET_QUESTION_2}} wrong. Overall, more than 10’000 Manifold tokens were in our liquidity pool, amounting to more than $5000, so the winners cashed in quite the sum. Congrats to them! We promise we did not engage in any insider trading.

Where do we go from here?

This dataset is the main product of the competition, not the winners table. In the near term, we are finishing the packaging, following up on neutralization and nonspecific binding controls (including HSA-type controls), and then doing the main thing that improves the field: running more fast design-build-test cycles and releasing more standardized datasets on Proteinbase.

Acknowledgements

Thanks to everyone who submitted designs and wrote method notes. And thanks to everyone who contributed to making this the largest community-driven protein design competition.

Team Adaptyv

Supplementary: Ensuring our experimental setup is rigorous

We ran the binding affinity assays using our default protocol, fully documented here. It involves DNA optimization for cell-free expression, synthesis, cell-free expression, then standardized expression/loading checks, with binding characterized via SPR (plus BLI where appropriate). We aimed for 3 replicates per data point.

There is, however, a key detail we must mention: we label "did not express / did not load" separately from "did not bind" and also keep a label for "cannot tell" (loading issues, channel artifacts, inconsistent replicates). This ensures we are not forcing ambiguous results into "non-binder" and are keeping the data robust and reliable.
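If you reanalyze the data, it helps to keep these outcomes as distinct categories rather than a single binder/non-binder flag. The sketch below is one hypothetical way to represent them; the enum names and the "binder" label are assumptions for illustration and may not match the field names in the Proteinbase downloads.

```python
from collections import Counter
from enum import Enum


class Outcome(Enum):
    """Hypothetical per-design outcome labels mirroring the categories in the text."""
    BINDER = "binder"
    NON_BINDER = "did not bind"
    NOT_EXPRESSED = "did not express / did not load"
    INCONCLUSIVE = "cannot tell"


def summarize(outcomes):
    """Count designs per label, keeping ambiguous results out of the non-binder bucket."""
    counts = Counter(outcomes)
    return {outcome.value: counts[outcome] for outcome in Outcome}


# Made-up example, not competition data.
example = [
    Outcome.BINDER,
    Outcome.NON_BINDER,
    Outcome.NON_BINDER,
    Outcome.NOT_EXPRESSED,
    Outcome.INCONCLUSIVE,
]
summary = summarize(example)
print(summary)
```

Keeping "cannot tell" separate means it can be excluded from, or reported alongside, any hit-rate denominator instead of silently counting as negative data.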

Supplementary: How we fitted and ranked the binders

As NiV-G is a tetramer, most of the binding data does not follow clean 1:1 kinetics. We observed both bivalent and conformational-change binding effects, so a plain 1:1 fitting model is not ideal.

We considered fitting only lower concentrations to minimize these artifacts, but selectively dropping data creates its own problems: overfitting risk and endless "why was my sequence treated differently?" questions. More complex per-design approaches are even harder to defend.

Instead, we went with the simplest defensible and robust method across all designs screened: fit the full data with bivalent and conformational models first (these actually describe the binding physics), then use those fits to anchor the baseline parameters. From there, we derive a standardized 1:1 proxy. The 1:1 proxy does not fit all curves closely because, as we mentioned, some of the interactions are not actually 1:1. This is expected given our standardized approach: we opted for standardization over treating each fit in its own unique way to make the competition’s assessment more robust.

There is one specific failure mode we wanted to avoid: a weak binder showing signal only at high concentrations, which a flexible model would happily call a "nanomolar" binder, filling the leaderboard with unreliable binders. Our approach should prevent that.
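For readers less familiar with SPR fitting, here is a minimal sketch of the textbook 1:1 (Langmuir) model. It is an illustration only, not the fitting pipeline used for the leaderboard, and all parameter values are made up. It shows why the concentration series matters: a weak binder only produces appreciable signal at the highest concentrations.

```python
import math


def equilibrium_response(conc: float, kd: float, r_max: float) -> float:
    """Steady-state response for 1:1 binding: R_eq = R_max * C / (C + K_D)."""
    return r_max * conc / (conc + kd)


def association(t: float, conc: float, k_on: float, k_off: float, r_max: float) -> float:
    """Response during analyte injection, starting from an empty surface."""
    kd = k_off / k_on
    k_obs = k_on * conc + k_off  # observed rate constant at this concentration
    return equilibrium_response(conc, kd, r_max) * (1 - math.exp(-k_obs * t))


def dissociation(t: float, r_start: float, k_off: float) -> float:
    """Response after the injection ends: single-exponential decay."""
    return r_start * math.exp(-k_off * t)


# Hypothetical concentration series and affinities (assumptions for illustration).
concentrations = [1e-9, 1e-8, 1e-7, 1e-6]  # molar
for label, kd in [("strong binder, K_D = 1 nM", 1e-9), ("weak binder, K_D = 1 uM", 1e-6)]:
    fractions = [equilibrium_response(c, kd, r_max=1.0) for c in concentrations]
    print(label, [f"{f:.3f}" for f in fractions])
```

In this toy example the strong binder is already half-saturated at the lowest concentration, while the weak binder stays near zero until the top of the series. A flexible model fitted mostly to that last curve has very little to constrain it.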

We have made everything open-source on Proteinbase: raw sensorgrams, the bivalent/conformational fits that show what's actually happening, and the 1:1 proxy we used for ranking. You can download these and explore our standardization pipeline. We’re open to any suggestions regarding our approach.

X thread (12 tweets)

1/12 Nipah Binder Competition results are in. 1’200 designs tested against NiV-G. {{EXPRESSED_PCT}}% expressed, {{BINDERS_PCT}}% bound, top 10 all sub-nM. But that’s not all, as we’ve seen some pretty strong models, contenders, and unique binding profiles - a thread.

2/12 Quick history: Our first EGFR competition had a 2.5% hit rate. Round 2 hit 13%, with the winner at 1.2 nM, outperforming Cetuximab. A couple of years ago, a hit rate > 1% was unheard of, and now we get an order of magnitude increase from designers doing this for fun.

3/12 However, Nipah is a harder target than EGFR: NiV-G is a tetramer, with few solved structures in the PDB and less prior work to build on. We still hit ~8%. That's roughly 7-8 binders per 96-well plate without display or library screening.

4/12 Winner: {{DENOVO_1_ID}} by {{DENOVO_1_DESIGNER}}, {{DENOVO_1_KD}}, using {{DENOVO_1_METHOD}}.

2nd: {{DENOVO_2_ID}} by {{DENOVO_2_DESIGNER}} ({{DENOVO_2_KD}})

3rd: {{DENOVO_3_ID}} by {{DENOVO_3_DESIGNER}} ({{DENOVO_3_KD}})

5/12 Highest hit rate: {{HITRATE_1_DESIGNER}} at {{HITRATE_1_PCT}}% ({{HITRATE_1_BINDERS}}/{{HITRATE_1_TESTED}}). Best performing methods: {{TOP_METHOD_1}} and {{TOP_METHOD_2}}. Though some top binders came from workflows almost nobody else used. For example, @_judewells led the rational engineering front with an X nM KD binder. You’ll find his design strategy here https://x.com/_judewells/status/1996289706221330536

6/12 Strong binders came in two flavors: fast on-rates that saturate quickly, or off-rates so slow that the sensorgrams flatline for minutes. We were impressed by all the different binding profiles. When it comes to therapeutic modalities, diversity was also key, with strong binders ranging from nanobodies to antibodies to minibinders.

7/12 We selected designs three ways: Boltz-2 ipSAE ranking (600), community votes (200), expert picks (400). Community picks performed surprisingly well, better than the in silico predictor. We call this the “wisdom of the crowd” for protein design. Who knows if it generalizes to other protein properties.

8/12 Ranking was nontrivial. NiV-G is a tetramer, so most curves aren't 1:1 kinetics. We fit bivalent and conformational models first, then derived a standardized proxy for fair comparison. We ensured our method is reliable and fair across all binding profiles.

9/12 All data is public and open-source: binding affinities, raw sensorgrams, mechanistic fits, ranking proxy, expression values. We’re excited by how this will be used in the future. So have at it!

10/12 We ran a prediction market on Manifold during the wet lab. Crowd called {{MARKET_QUESTION_1}} correctly, missed on {{MARKET_QUESTION_2}}. Some participants profited quite a lot.

11/12 Up next: neutralization assays, specificity controls, and more competitions.

12/12

Proteinbase collection: https://proteinbase.com/competitions/adaptyv-nipah-competition

Full data package:

In-depth blog post:

LinkedIn post

We just released wet lab results from the Nipah Binder Competition. 680 designers submitted over 10’000 designs. We tested 1’200 of them against NiV-G. {{EXPRESSED_PCT}}% expressed, {{BINDERS_PCT}}% bound, with the top 10 all reaching sub-nM affinities. The best binder had an impressive X nM KD.

{{DENOVO_1_DESIGNER}} took first place at {{DENOVO_1_KD}} using {{DENOVO_1_METHOD}}. {{HITRATE_1_DESIGNER}} had the best hit rate at {{HITRATE_1_PCT}}%. Methods ranged from BoltzGen to BindCraft to rational engineering to workflows we'd never seen before.

To put this into context, four years ago, de novo hit rates on a target like this would have been under 1%. Now we're seeing 8% from a community using dozens of different approaches on a challenging target with lots of viral disease prevention potential. The field has definitely accelerated.

We're releasing everything: binding affinities, expression profiles, raw sensorgrams, replicate data, fitting methodology. If you want to dig in, reanalyze, or build on it, it's all here

proteinbase.com/competitions/adaptyv-nipah-competition.

In-depth analysis: blog post link

/// Still a bit LLMy

After weeks of running binding assays on 1’200 designs, we're excited to share the full wet lab results from our Nipah Binder Competition.

All results are now live: proteinbase.com/competitions/adaptyv-nipah-competition

Key numbers

From over 10’000 submissions, we selected 1’200 designs for experimental validation against NiV-G (Nipah virus Glycoprotein G).

Expression/Loading: {{EXPRESSED_PCT}}% success rate

Binding: {{BINDERS_PCT}}% showed binding

Strong binders: {{STRONG_BINDERS_N}} designs below {{STRONG_KD_THRESHOLD}} (proxy KD)

The clear winners

{{DENOVO_1_ID}} by {{DENOVO_1_DESIGNER}} — {{DENOVO_1_KD}}

{{DENOVO_2_ID}} by {{DENOVO_2_DESIGNER}} — {{DENOVO_2_KD}}

{{DENOVO_3_ID}} by {{DENOVO_3_DESIGNER}} — {{DENOVO_3_KD}}

However, all top 10 binders were clear winners and will be awarded shortly. All of them achieved KDs < 1 nM, which is an impressive feat! Congratulations to all the winners!

What we learned

Expression is getting reliable. The community has internalized best practices, and tools like SolubleMPNN, solubility predictors, and evolutionary likelihoods from PLMs are paying off. In our standardized cell-free setup, most teams avoided the most frequent expression landmines.

Binding hit rates have improved dramatically. Four years ago, de novo binder hit rates were around 0.1%. Now we're seeing ~8% on an unconventional viral target with diverse methods. That's around 7 binders per 96-well plate, which means binder design no longer requires screening huge libraries of variants (sometimes in the range of millions).

Method diversity was high. BoltzGen emerged as the most widely adopted new tool, alongside established methods like RFDiffusion and BindCraft. But popularity didn't always equal performance, as some of the best hits came from less common workflows including an AI-free rational design approach.

Hybrid workflows won. The top performers combined rational design (epitope choice, constraints, neutralization thinking) with large-scale computational sampling (BoltzGen) and aggressive filtering from AI and physics-informed predictors. Neither pure computation nor pure intuition could get to the very top alone.

Data transparency

Many curves weren't clean 1:1 kinetics, so we published raw sensorgrams and multiple fits (including bivalent/conformational models), and explained our curve-fitting rationale in the release blog post.

What's next

We're finalizing neutralization assays ({{FULL_NEUTRALIZERS_N}} fully neutralizing, {{PARTIAL_NEUTRALIZERS_N}} partial so far), running nonspecific binding controls, and packaging the full dataset with docs and raw downloads. Then: more competitions, more standardized datasets.

Explore the full results: proteinbase.com/competitions/adaptyv-nipah-competition

Thanks to everyone who submitted designs and wrote method notes. And to everyone who made this the greatest community-driven protein design competition!

Best, Team Adaptyv

adaptyvbio.com · proteinbase.com